Thinking of a career in machine learning? It is emerging as one of the hottest fields today. Are you a technology enthusiast, but have no idea where to start? Well, here it is.

Machine learning is so pervasive today that you probably use it dozens of times a day without knowing it. In the last decade, ML has given us effective web search, practical speech recognition, self-driving cars, and a vastly improved understanding of the human genome. Machine learning is a core field for implementing artificial intelligence (AI).

Dedicated machine learning courses today teach the theoretical underpinnings of the field and also ensure that you gain the practical know-how needed to apply these theories to new technical problems.

Machine Learning – Introduction

Machine learning (ML) is the technology of enabling computers to learn without being explicitly programmed. It focuses on building programs that can access data and use it to learn for themselves through pattern recognition.

Machine learning uses statistical techniques to give computer programs (also called software agents) the ability to learn from past experience and improve how they perform specific tasks. It is one of the basic tools of artificial intelligence.

“An AI god will emerge by 2042 and write its own bible. Will you worship it?”

VentureBeat (American technology website)

The process of learning begins with observations or data, which can be examples, instruction, or direct experience. ML-enabled devices look for patterns in the examples we initially provide and use them to make better decisions (that is, give us better results) in the future. The original objective of ML was to enable computers to learn automatically, without human intervention or assistance, and adjust their actions accordingly. One example is making them understand the meaning of a text (mimicking the human ability through semantic analysis) rather than reading it as a sequence of keywords.

Machine learning – Some Predominant Methods in Use Today

- Supervised ML algorithms

- Unsupervised ML algorithms

- Semi-supervised ML algorithms

- Reinforcement ML algorithms

#1 Supervised Machine Learning

Machines are given training datasets from which they learn how to respond to new inputs. All the data are labeled, meaning each example is paired with its correct output.

All supervised learning algorithms are provided in advance with this ground truth, which serves as the training dataset.

Suppose you want a machine (or a computer) to recognize a ‘crocodile’ from its picture. It needs labeled examples from which it can learn to classify a new “input” (a picture of a crocodile) as a “crocodile”. For example, we can build a training dataset of 20,000 images of crocodiles and 20,000 more that are not of crocodiles.

Y = f(X), where Y is the output and X is the input

‘X’ (in this case a picture of a crocodile) is analyzed with respect to what was learned from the training dataset. The model then uses ‘X’ to predict the output and gives the answer ‘crocodile’ (Y).

Supervised ML can also compare its output with the correct, intended output in order to find errors and adjust itself accordingly. If a correct output is generated (e.g. this time, a crocodile), this experience is used to predict similar outputs when the model is exposed to new input data in the future. This learning from experience is the essence of machine learning.
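The compare-and-correct loop described here can be sketched with a perceptron, one of the simplest error-driven learners. This is only an illustration under assumptions: the toy dataset (the logical AND of two inputs), the function names, and the number of passes over the data are hypothetical choices made for the example.

```python
def predict(weights, bias, x):
    """Return 1 if the weighted sum of the inputs is positive, else 0."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train_perceptron(examples, epochs=20):
    """Nudge the weights whenever a prediction disagrees with its label."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in examples:
            error = target - predict(weights, bias, x)  # 0 when correct
            weights = [w + error * xi for w, xi in zip(weights, x)]
            bias += error
    return weights, bias


# Hypothetical labeled data: the output is 1 only when both inputs are 1.
examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(examples)
predictions = [predict(weights, bias, x) for x, _ in examples]
print(predictions)
```

Each wrong answer changes the weights a little, and correct answers leave them alone, so the model settles once it reproduces every label in the training set.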

Another example of supervised learning is training your computer to filter spam messages based on previously received messages. This is such a common use of ML that it is generally overlooked as too minor.

Supervised ML always depends on a previously supplied training dataset, and its goal is to give an output for every new input. The output (in the above case “crocodile”) depends on what the computer has already learned from that dataset.

The resulting output ‘Y’ can be a category (for example, ‘crocodile’ in the above case) or a real value such as ‘dollars’ or ‘weight’.

If the output ‘Y’ is a category, the task is called a classification problem.

If the output ‘Y’ is a real value, the task is called a regression problem.
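The two kinds of supervised problem can be shown side by side in plain Python. The sketch below uses a k-nearest-neighbors vote for classification and a least-squares line for regression. The feature values (body length and snout length in meters), the labels, and the helper names are hypothetical and chosen only for illustration; a real image classifier would work on far richer inputs.

```python
import math
from collections import Counter


def knn_predict(train, x, k=3):
    """Classification: majority vote among the k nearest labeled examples."""
    nearest = sorted(train, key=lambda pair: math.dist(pair[0], x))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def fit_line(xs, ys):
    """Regression: least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


# Hypothetical labeled examples: (body length, snout length) -> category.
labeled = [
    ((4.0, 0.60), "crocodile"),
    ((3.5, 0.50), "crocodile"),
    ((4.5, 0.70), "crocodile"),
    ((0.3, 0.02), "not crocodile"),
    ((0.5, 0.03), "not crocodile"),
    ((0.2, 0.01), "not crocodile"),
]
label = knn_predict(labeled, (3.8, 0.55))  # output is a category

# Hypothetical numeric data that follows y = 2x + 1 exactly.
slope, intercept = fit_line([1, 2, 3, 4], [3, 5, 7, 9])  # output is a real value
print(label, slope, intercept)
```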

Supervised ML (Points to Remember):

- Good examples are required to conceive and build the training dataset

- If the training dataset is incorrect, the ML algorithm will learn incorrectly, which can lead to losses

- Computation time can be very large, especially with big training datasets

#2 Unsupervised Machine Learning

Unsupervised ML algorithms have only input data (X) and no corresponding output variables. The system doesn’t have to find one right output. Instead, it explores the data and draws inferences from it.

Unlike supervised learning above, there are no correct answers and there is no teacher (that is, no labeled training dataset as in the supervised case). Algorithms are left to discover and present interesting structures in the data we provide as input.

Unsupervised learning problems fall into two further types:

- Clustering – discover the inherent groupings in data.

Such as grouping buyers or customers by their purchasing history

- Association – discover rules that describe large portions of the data.

Such as ‘people who buy X almost always tend to buy Y’, or patterns in Indian high school students’ performance in mathematics classes
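Both ideas can be sketched in a few lines of plain Python. The first function is a one-dimensional k-means that groups customers by spend, and the second measures how often baskets containing X also contain Y (the "confidence" of the rule X implies Y). The spend figures, the baskets, and the starting centers are hypothetical values chosen for the example.

```python
def kmeans_1d(values, centers, iterations=10):
    """Clustering: repeatedly assign each value to its nearest center,
    then move each center to the mean of the values assigned to it."""
    groups = [[] for _ in centers]
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for v in values:
            idx = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            groups[idx].append(v)
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups


def confidence(baskets, x, y):
    """Association: share of baskets containing x that also contain y."""
    with_x = [b for b in baskets if x in b]
    if not with_x:
        return 0.0
    return sum(y in b for b in with_x) / len(with_x)


# Hypothetical monthly spend per customer, with no labels attached.
spend = [12, 15, 18, 210, 230, 250]
centers, groups = kmeans_1d(spend, centers=[min(spend), max(spend)])

# Hypothetical shopping baskets.
baskets = [{"bread", "butter"}, {"bread", "butter", "milk"}, {"bread"}, {"milk"}]
rule_strength = confidence(baskets, "bread", "butter")
print(centers, groups, rule_strength)
```

Nobody tells the algorithm which customers are "low" or "high" spenders; the two groups fall out of the data itself.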

#3 Semi-supervised Machine Learning

These days, many learning algorithms are a mix of supervised and unsupervised. In reality, it is too expensive and often unrealistic to obtain labels (target values) for all the data you have. Semi-supervised algorithms use both labeled and unlabeled data when certain assumptions apply. Typically, there is a small amount of labeled data and a large amount of unlabeled data.
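One common way to combine the two kinds of data is pseudo-labeling: fit a simple model on the few labeled points, let it label the unlabeled ones, then refit on everything. The sketch below does this with a nearest-centroid model on one-dimensional data. It is one possible approach among several, and the data values, label names, and function names are hypothetical.

```python
def centroids(labeled):
    """Mean value of the points carrying each label."""
    totals = {}
    for x, label in labeled:
        total, count = totals.get(label, (0.0, 0))
        totals[label] = (total + x, count + 1)
    return {label: total / count for label, (total, count) in totals.items()}


def nearest_label(x, cents):
    """Label whose centroid lies closest to x."""
    return min(cents, key=lambda label: abs(x - cents[label]))


def pseudo_label_fit(labeled, unlabeled, rounds=5):
    """Alternate between labeling the unlabeled pool and refitting."""
    cents = centroids(labeled)
    for _ in range(rounds):
        pseudo = [(x, nearest_label(x, cents)) for x in unlabeled]
        cents = centroids(labeled + pseudo)
    return cents


# Hypothetical data: two labeled points and six unlabeled ones.
labeled = [(1.0, "low"), (9.0, "high")]
unlabeled = [0.5, 1.5, 2.0, 8.0, 8.5, 10.0]
cents = pseudo_label_fit(labeled, unlabeled)
guess = nearest_label(3.0, cents)
print(cents, guess)
```

This only helps when the assumption behind it holds, namely that points close to each other tend to share a label.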

In short, the nature of a problem determines whether it requires a supervised or an unsupervised approach. Some problems will require labeled data in order to train your learning algorithm, and some will not. Having or not having labels will NOT change the nature of the problem you’re trying to solve.

#4 Reinforcement Machine Learning

This type of algorithm interacts with its environment through actions and is rewarded for good performance (or penalized for going wrong). There can be positive reinforcement (resulting in rewards) or negative reinforcement.

For example, as a toddler, when you were taught to walk without holding on to anything and you succeeded, you were given candies or chocolate (or maybe just smiles, claps, and cuddles!) and you knew you had done it right. You never forgot how to walk independently after that. You kept doing the same thing the right way, because you had been rewarded the first time.

Reinforcement algorithms work somewhat like that.

There is no labeling in this kind of learning, nor is it about pattern recognition or grouping. Instead, you model your algorithm so that it interacts with its environment.

If your algorithm does a good job, you reward it; otherwise you punish it (negative reinforcement). With continuous interaction and learning, it goes from performing badly to performing well and solves the problem assigned to it.

For example, think of the suggestions that appear in a drop-down while you type into Google (or on your phone’s keypad). You get suggestions derived from your browsing history as well as from combinations of related terms. These ‘recommender’ algorithms are often a mix of reinforcement and supervised learning methods. Other examples include the suggested videos on your YouTube, Netflix, or Prime feed, tagged as ‘Videos you may like’.

This method allows software agents to automatically determine ‘an ideal behavior’ in order to maximize their performance. Simple reward feedback is required for the agent to learn which action, behavior, or output is best. The reward is known as the reinforcement signal. For example, if you want to train a robot to walk on a line, you can develop an algorithm that takes the distance walked on the line as the reward. The more closely the robot keeps to the line, the greater the reward it achieves. In reality, the reward is usually a much more complicated function and typically not simply a single measurement.
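The line-walking robot can be turned into a small tabular Q-learning sketch. Everything specific here is an assumption made for illustration: the robot's state is its sideways offset from the line (from -2 to 2), it can steer left, stay, or steer right, and the reward is the negative of its distance from the line. To keep the result reproducible, the sketch tries every action in every state in turn instead of exploring at random, which a real agent would normally do.

```python
STATES = range(-2, 3)   # sideways offset from the line; 0 means on the line
ACTIONS = (-1, 0, 1)    # steer left, stay, steer right


def step(state, action):
    """Hypothetical environment: move sideways, reward closeness to the line."""
    next_state = max(-2, min(2, state + action))
    reward = -abs(next_state)  # highest reward (0) when exactly on the line
    return next_state, reward


def learn_q(sweeps=200, alpha=0.5, gamma=0.9):
    """Apply the Q-learning update to every state-action pair, many times."""
    q = {(s, a): 0.0 for s in STATES for a in ACTIONS}
    for _ in range(sweeps):
        for s in STATES:
            for a in ACTIONS:
                next_state, reward = step(s, a)
                best_next = max(q[(next_state, b)] for b in ACTIONS)
                target = reward + gamma * best_next
                q[(s, a)] += alpha * (target - q[(s, a)])
    return q


q_table = learn_q()
# The learned behavior: in each state, pick the action with the highest value.
policy = {s: max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in STATES}
print(policy)
```

The robot is never told which way to steer. It only receives the reward signal, and the steering rule that brings it back to the line emerges from maximizing that reward over time.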

Machine Learning Courses – How to Make a Start?

To get into this field, you must complete Class 11-12 with Physics, Chemistry, and Mathematics or with Physics, Chemistry, and Biology or with Physics, Chemistry, Mathematics, and Biology. You may also complete Class 11-12 in any stream with Mathematics or in Humanities stream with Psychology.

Then, depending upon your educational background in Class 11-12, you can pursue a Bachelor’s degree, a Bachelor’s and Master’s, or a Bachelor’s, Master’s, and Doctoral degree in any of the following academic fields.

Remember that not all of these fields are available at all three levels, i.e. Bachelor’s, Master’s, and Doctoral.

- Machine Learning

- Machine Learning and Autonomous Systems

- Machine Learning and Machine Intelligence

- Advanced Computing

- AI & Deep Learning

- Applied Artificial Intelligence

- Applied Mathematics

- Applied Statistics

- Artificial Intelligence

- Artificial Intelligence & Machine Learning

- Cloud Computing

- Cognitive Sciences

- Communication and Computer Engineering

- Computational Neuroscience

- Computational Sciences & Engineering

- Computer Networks

- Computer Networks & Information Security

- Computer Science & Engineering

- Computer Vision

- Cybersecurity & Artificial Intelligence

- Data Science

- Data Science & Artificial Intelligence

- Data Science & Engineering

- Electronics and Computer Engineering

- Embedded Systems and VLSI Design

- High Performance Computing and Cloud Technologies

- Human-Centered Big Data & Artificial Intelligence

- Information Science & Engineering

- Internet of Things

- Mathematical Statistics

- Mathematics

- Mathematics and Computer Science

- Mathematics and Statistics

- Operations Research

- Programming and Software Engineering

- Psychology and Neuroscience

- Quantum Computing

- Robotics

- Statistics

- Statistics and Computer Science

Machine Learning Courses – Online

- Machine Learning Course by Stanford University (Coursera) – Created by Andrew Ng, Co-Founder of Coursera and Professor at Stanford University

- Deep Learning Course (deeplearning.ai) Coursera – brought to you in association with Stanford Professors and NVIDIA

- Machine Learning Course A-Z™: Hands-On Python & R In Data Science (Udemy) – developed by Kirill Eremenko

- Machine Learning Data Science Course (Harvard University) – Professional Certification Program on edX

- Machine Learning – Artificial Intelligence Course (Columbia University) – edX

- Machine Learning Course (Stanford School of Engineering) – Stanford ONLINE

Machine Learning & AI

- Stanford University’s Machine Learning on Coursera

- The University of Washington’s Machine Learning Specialization (Coursera)

- Columbia University’s Machine Learning for Data Science and Analytics on EdX

- Artificial Intelligence for Robotics by Georgia Tech Masters on Udacity

- AI For Everyone by IBM on Coursera

- The University of New South Wales’ Designing the Future of Work on Coursera

- Georgia Tech’s Machine Learning on Udacity

- Intro to Artificial Intelligence by Georgia Tech Masters on Udacity

Python & ML

- Computer Science & Programming Using Python by MITx on EdX

- Statistics With Python Specialization by the University of Michigan on Coursera

- Machine Learning Ethics by the University of Michigan on Coursera

- Rice University’s An Introduction to Interactive Programming in Python (Coursera)

- Python Data Structures by the University of Michigan on Coursera

- Python for ML by the University of California, San Diego on EdX

- Programming for Everybody (Getting Started with Python) by the University of Michigan on Coursera

- Python and Statistics for Financial Analysis by the Hong Kong University of Science and Technology on Coursera

- Complete Machine Learning Training with Python by the University of California, San Diego on EdX

- Problem Solving, Python Programming, and Video Games by the University of Alberta on Coursera

- University of Michigan’s Using Databases with Python on Coursera

Deep Learning

- Introduction to Deep Learning by National Research University Higher School of Economics on Coursera

- Deep Learning for Business by Yonsei University on Coursera

- Deep Learning with Tensorflow by IBM on EdX

- Deep Neural Networks with PyTorch by IBM on Coursera

- Computational Neuroscience by the University of Washington on Coursera

- Deep Learning in Computer Vision by National Research University Higher School of Economics on Coursera

- Deep Learning with Python and PyTorch by IBM on EdX

- Applied AI with Deep Learning by IBM on Coursera

If you are ready to take yourself to the next level, check out 5 more specializations and intermediate courses by top stakeholders in this industry:

- Machine Learning Using SAS Viya by SAS

- Applied AI: Artificial Intelligence with IBM Watson Specialization by IBM

- Python Data Products for Predictive Analytics Specialization by University of California, San Diego

- Tensorflow in Practice Specialization by deeplearning.ai

- Statistics with SAS by SAS

Two Excellent Book Companions

Find the links to these at the end of this post.

- Introduction to Statistical Learning, also available for Free online

- Hands-On Machine Learning with Scikit-Learn and TensorFlow, also available through a Safari subscription

Python Machine Learning

Even with no experience in math or programming, you can start working toward a career in machine learning. It is pretty much enough to master one programming language (popularly Python, R, or Octave for beginners) and be able to use it confidently. A little exposure to linear algebra at the start would be great, but it is not a necessity.

Python would be a perfect choice for any beginner. Python machine learning frameworks are minimalistic and intuitive, with full-featured, ready-made libraries that significantly reduce the time required to get your first results. Python code is also far shorter and crisper than the equivalent C/C++.

Ask Andrew Ng, the well-known instructor and the mind behind Baidu AI and Google Brain. His classes are the benchmark against which other courses and programs in the market today are graded. Stick with us for a few more paragraphs to get a link where you can practice your own approach to ML using Python in an integrated development environment (IDE), after getting hands-on training with a beginner’s guide to the most basic Python machine learning algorithm.

Machine Learning vs. Deep Learning

Know that deep learning is machine learning. More technically, deep learning is a subset of machine learning and is often considered an evolution of it. The two differ in how they approach a problem.

Example 1

For example, consider a flashlight that turns on when it recognizes the audible cue of someone saying the word “dark”. This is possible with ML algorithms. Now, if the flashlight had a deep learning algorithm along with, say, a light sensor, it could turn itself on in the absence of light, or when it hears phrases like ‘I can’t see’ or ‘there seems to be no light in here’ and similar variations, without the direct cue of the word ‘dark’.

This, however, is a minor use case. The real use of deep learning neural networks is on a much larger scale.

Example 2

Now say you want a computer to recognize a ‘stop’ sign visually. In a traditional ML-based framework, you will need the computer to ‘see’ hundreds of thousands, even millions, of images until it gets the answer right practically every time, sun or rain, fog or no fog. These training images are pre-labeled as ‘stop’ or ‘not stop’, depending on whether they show a stop sign or not.

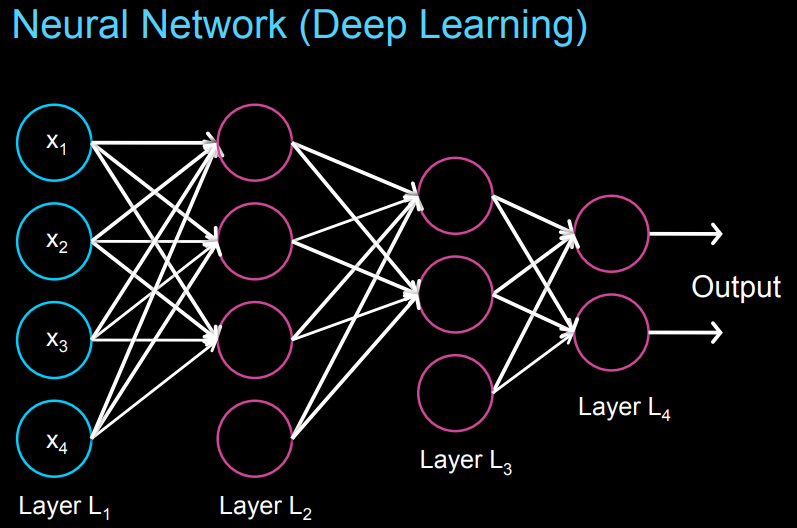

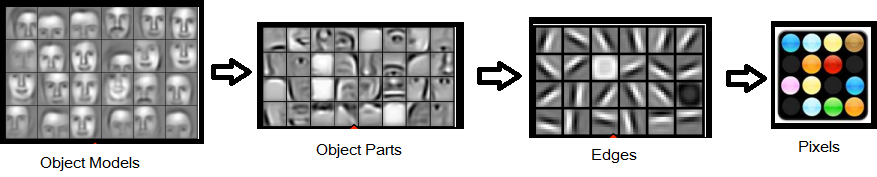

In a deep learning-based framework, the attributes of a ‘stop’ sign image are chopped up and “examined” by the layers of a neural network. In this case, the input image is broken into smaller attributes such as its octagonal shape, its distinctive letters, its red color, its size, its Cartesian coordinates, and the motion (or lack of it) depicted in the picture.

Deep learning approaches therefore typically deal with much higher volumes of data than traditional ML, because the network learns its own attributes from raw inputs such as pixels, whereas traditional ML usually relies on attributes defined in advance by a human.

The neural network’s task is to conclude whether the input image shown to it is a stop sign or not. It compares every small attribute with the corresponding attributes of images it has ‘seen’ previously. Such networks are usually trained on labeled images, but they can also learn useful attributes from images that are not pre-labeled as ‘stop’ or ‘not stop’.
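How attributes pass through layers to reach a yes-or-no answer can be sketched with a tiny two-layer network. This is a simplification under stated assumptions: the three input attributes (how octagonal, how red, how clearly the letters read STOP) are hypothetical scores between 0 and 1, and the weights are picked by hand for illustration. A real deep network starts from raw pixels and learns its weights from training images.

```python
import math


def dense(inputs, weights, biases):
    """One layer: a weighted sum of the inputs plus a bias, per unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Keep positive signals, silence negative ones."""
    return [max(0.0, v) for v in values]


def sigmoid(x):
    """Squash a score into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


# Hand-picked (not learned) weights, purely hypothetical.
W1 = [[1.0, 1.0, 0.0],   # hidden unit 1 fires for "octagonal AND red"
      [0.0, 0.0, 1.0]]   # hidden unit 2 fires for "letters look like STOP"
B1 = [-1.0, 0.0]
W2 = [[3.0, 3.0]]        # output unit combines both hidden signals
B2 = [-3.0]


def stop_sign_score(features):
    """Forward pass: attributes -> hidden layer -> probability-like score."""
    hidden = relu(dense(features, W1, B1))
    (logit,) = dense(hidden, W2, B2)
    return sigmoid(logit)


score_stop = stop_sign_score([1.0, 1.0, 1.0])    # octagonal, red, says STOP
score_other = stop_sign_score([0.0, 1.0, 0.0])   # red, but nothing else fits
print(score_stop, score_other)
```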

The case of Andrew Yan-Tak Ng

Instead of a ‘stop’ sign, Andrew worked on the visual recognition of a cat.

Andrew Ng (one of the people who brought deep learning to wide attention) did this with videos of cats back in 2012 at Google (he later headed Baidu AI in China). He worked with images from 10 million YouTube videos. Andrew was a driving force behind Google Brain as we know it today.

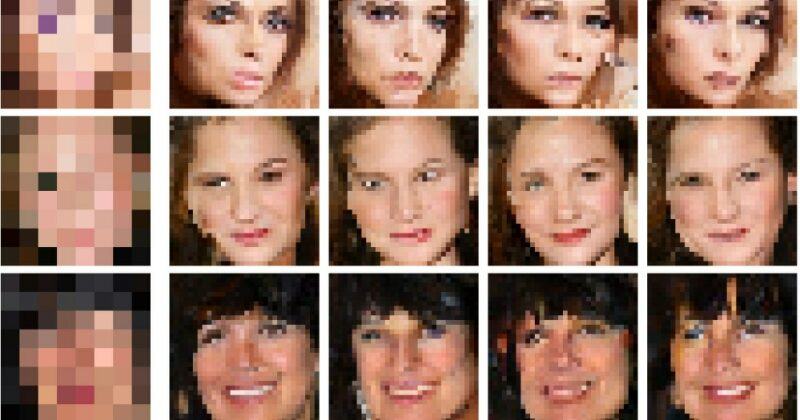

A very common application of deep learning models today is face recognition, where images are broken down into pixels and then analyzed for edges and larger features. These attributes travel through the various layers of the neural network to arrive at an output (and finally recognize the face), even in dim light, rain, or fog. This is achieved within seconds, as you might have seen with an iPhone.

Today, machines trained via deep learning are capable of better image recognition than humans in some scenarios, which range from identifying cats to identifying indicators of cancer in blood and tumors in MRI scans.

Top 10 Machine Learning Platforms:

- MATLAB

- IBM Watson Studio

- RapidMiner

- Google Cloud AI Platform

- Azure Machine Learning Studio

- IBM Watson Machine Learning

- Anaconda Enterprise

- IBM Decision Optimization

- Amazon SageMaker

- Big Squid

Machine Learning Jobs – Opportunities in the Industry

If you want to get a ‘great’ opportunity in machine learning, you need to graduate with an Engineering degree from a premier Engineering institution.

Or else, you can work in software engineering and development for a few years (say, about 4-5 years).

You may also try to find an internship before you look for a full-time opportunity.

After your Master’s degree in a suitable field, you can get a good start. But in this case too, doing an internship first will help you land a good job in ML.

If you plan to do a Doctoral degree first, it could be a really good idea as a Ph.D. can open up great research opportunities for you.

In most cases, at the beginning of your career, depending upon your educational qualifications as well as skills, you will start off in any one of the following or similar positions:

- Machine Learning Engineer

- Computational Scientist

- Data Scientist (ML)

- Software Development Engineer – ML

- Software Engineer (AI/ML)

- Deployment Engineer (ML)

- Payload Executive (ML)

- Research & Development Engineer

- Research Engineer

- Research Scientist

You may also get opportunities in research and teaching in some of the universities in the world. But to get an opportunity there, you must complete your MS and Ph.D.

In a University, after your Ph.D. in areas related to machine learning, you may get an opportunity as a:

- Research Associate

- Post-Doctoral Fellow

- Assistant Professor/ Similar position

Various types of companies that may recruit you:

- Internet and IT giants such as IBM, Google, Microsoft, Facebook, Amazon Services Inc., Tencent, Twitter, etc.

- Other IT companies focused on software engineering in the field of ML such as IPsoft, OpenAI, AlphaSense, AIBrain, CloudMinds, DeepMind, H2O, Iris AI, Active.ai, etc.

- Companies which design and develop various microprocessors, electronic systems, devices, and applications, or advanced semiconductor technologies for industrial clients or for bulk consumption, such as Apple Inc., Intel, Nvidia, Qualcomm, Cisco, Samsung Electronics, Siemens, Verizon, Ericsson, Oracle, SAP, IMEC, Nokia, Symantec, etc.

- Automotive and transportation systems manufacturers (some of these are suppliers of automotive technology for the biggest car manufacturers in the world) including aerial flight systems such as Toyota, Tesla, Volvo, Autonodyne, Xevo, Nuance Automotive, Hyundai etc.

- Space research and administration organisations such as NASA, ISRO, etc.

- Defence research organizations like DRDO etc.

- FinTech – Companies which are into the BFSI industry such as insurers, consultancies, financial institutions, investment banking companies or others like Kasisto, Tesorio, Splunk, YotaScale Inc, Zestfinance, Scienaptic Systems, Underwrite.Ai, Kensho etc.

- Health Tech – companies such as MetaMind involved in deep learning networks, image recognition, text analysis, machines / systems / devices to cater to the healthcare sector.

- Technology / research divisions of consultancies and financial firms like Deloitte, Goldman Sachs, JPMorgan Chase.

Some of the top universities in the world working in the area of ML are:

- California Institute of Technology

- Carnegie Mellon University

- Columbia University

- Cornell University

- Georgia Institute of Technology

- Harvard University

- IIT Delhi

- IIT Bombay

- IIT Kharagpur

- IIT Kanpur

- IIT Madras

- IIT Patna

- IIT BHU

- IIT Hyderabad

- IIIT Hyderabad

- IIT Jodhpur

- Johns Hopkins

- MIT

- Nanyang Technological University

- Stanford University

- University of California Los Angeles

- University of California, Berkeley

- University of Illinois Urbana Champaign

- University of Pennsylvania

- University of Southern California

- University of Texas at Austin

- University of Washington Seattle

- Yale University

Prospects Overview – Machine Learning Future

Some of the key growth factors that the market is witnessing include an increasing number of end users, growing research & development activities, rising usage of smart wearables and devices, and widespread industrial automation.

The global market for Machine Learning, estimated at US$ 2.8 billion in 2020, is forecast to reach a revised size of US$ 27.7 billion by 2027, growing at a compound annual growth rate (CAGR) of 38.4%.

Amid the COVID-19 crisis, the global market for Deep Learning, estimated at US$ 4.4 billion in 2020, is anticipated to reach US$ 44.3 billion by 2027, growing at a CAGR of 39.2%.

America accounts for more than 30.2% of the global market size in 2020, whereas China is expected to grow at a CAGR of 38% through 2027.

The global demand for ML & Robotics systems is anticipated to be driven by the massive investments made by countries such as China, the US, Russia, and Israel in the development of next-generation systems, and by the large-scale procurement of such products by countries like Saudi Arabia, Japan, India, and South Korea.

The global Defense ML & Robotics market, valued at US$ 39.22 billion in 2018, is projected to grow at a rate of 5.04% compounded annually to reach US$ 61 billion by 2027. The cumulative global expenditure in this segment is valued at US$ 487 billion over 2018 to 2027.

The United States is the largest spender in the domain, with China, Russia, Japan, India, Saudi Arabia, and South Korea anticipated to account for the bulk of spending.
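The growth rates quoted in this section can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end value / start value)^(1 / years) - 1. The short sketch below applies it to the start and end figures given above; the function and variable names are our own, and the year spans are taken as the difference between the stated years.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10% a year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures in US$ billion, as quoted in this section.
ml_rate = cagr(2.8, 27.7, 2027 - 2020)
dl_rate = cagr(4.4, 44.3, 2027 - 2020)
defense_rate = cagr(39.22, 61.0, 2027 - 2018)

for name, rate in [("Machine Learning", ml_rate),
                   ("Deep Learning", dl_rate),
                   ("Defense ML & Robotics", defense_rate)]:
    print(f"{name}: {rate:.1%} per year")
```

The computed values land within about a percentage point of the quoted rates. Small differences are to be expected, since the published market sizes are rounded.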

Machine Learning – Useful Links

- What is Machine Learning? – IBM Explains

- Machine Learning News – MIT (Massachusetts)

- Latest Research in Machine Learning

- Join the Titanic Competition : Machine Learning from Disaster – Kaggle

- Stanford ONLINE – Courses & Programs

- Most basic Python machine learning algorithm – ‘K-Nearest Neighbors (KNN)’

- Practice your own approach to ML (in this IDE)

Two Excellent Book Companions

- Introduction to Statistical Learning, also available for Free online

- Hands-On Machine Learning with Scikit-Learn and TensorFlow

Machine Learning – Conclusions

Are you a technology enthusiast constantly bugged by the question, ‘what is machine learning’? Well, we hope we’ve answered most of it and fuelled your thoughts about machine learning courses, machine learning jobs, Python machine learning, machine learning vs. deep learning, and the future of machine learning. ML roles are among the most sought-after jobs in the industry today. Whether you’ve already planned a career in it or are just starting to dip your toes in, allow us to make it a bit easier for you. Talk to an expert today to find out more of what you must know before you begin your journey.

With a Master’s in Biophysics-Biostatistics, Sreenanda acquired professional experience in computational proteomics of human molecules. She is currently working with the Research and Data Team at iDreamCareer.
